Research

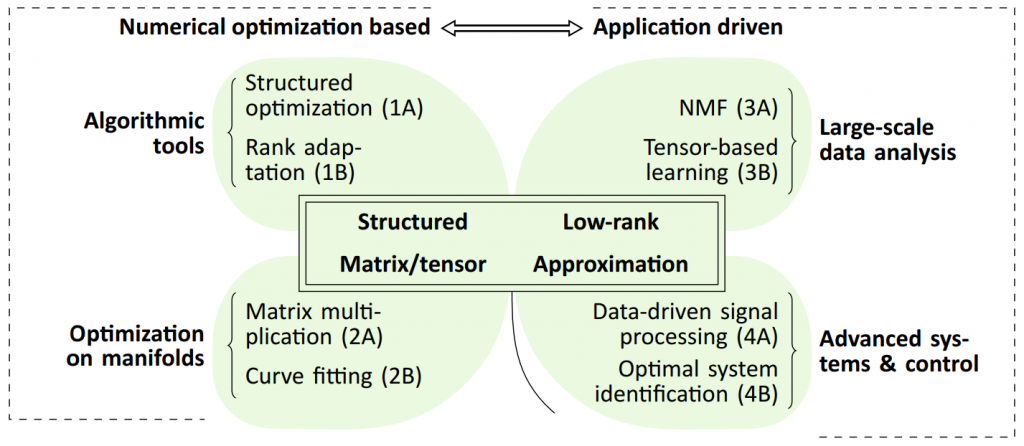

Higher-order tensors are the natural generalizations of vectors (first order) and matrices (second order). They may be viewed as multi-way arrays of numbers. Key to numerous applications involving matrices and/or tensors is the proper exploitation of structure, in particular low rank. This project aims to make a series of outstanding contributions to methods based on low-rank matrix/tensor approximation, with a strong emphasis on mathematical foundations and numerical properties. The activities are organized in four “leaves”, each consisting of a double work package (WP), as illustrated in the figure. All WPs rely heavily on low-rank matrix/tensor approximation, often in combination with other types of structure. They share similar mathematical and conceptual ingredients but combine them towards different goals.
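To make the notion of low rank for a multi-way array concrete, here is a minimal sketch in plain Python. It is an illustration only and not one of the project's algorithms: it builds a third-order tensor as a sum of two rank-1 terms (outer products of three vectors), so its rank is at most 2. The names `sum_of_rank1_terms`, `term1` and `term2`, the size `n = 10` and the vector entries are all assumptions chosen for the example.

```python
from itertools import product


def sum_of_rank1_terms(terms):
    """Build T[i][j][k] = sum over terms (a, b, c) of a[i] * b[j] * c[k]."""
    a0, b0, c0 = terms[0]
    dims = (len(a0), len(b0), len(c0))
    # Start from an all-zero three-way array stored as nested lists.
    T = [[[0] * dims[2] for _ in range(dims[1])] for _ in range(dims[0])]
    for a, b, c in terms:
        for i, j, k in product(range(dims[0]), range(dims[1]), range(dims[2])):
            T[i][j][k] += a[i] * b[j] * c[k]
    return T


n = 10  # illustrative size: the tensor is n x n x n

# Two rank-1 terms, each defined by three vectors of length n (made-up values).
term1 = ([i + 1 for i in range(n)], [2 * j for j in range(n)], [k - 3 for k in range(n)])
term2 = ([1] * n, [j * j for j in range(n)], [(-1) ** k for k in range(n)])

T = sum_of_rank1_terms([term1, term2])

# Storage comparison: all entries of T versus the vectors that generate it.
full_entries = n ** 3          # 1000 numbers
factor_entries = 2 * 3 * n     # 60 numbers

print(full_entries, factor_entries)
```

The 1000 entries of `T` are fully determined by the 60 numbers in the two terms. This gap between the size of the array and the size of its low-rank description is the kind of structure that low-rank approximation methods exploit.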

High-level objectives:

- Development of state-of-the-art algorithmic tools for structured numerical optimization and rank adaptation (WP 1A and 1B).

- Development of advanced numerical algorithms for matrix multiplication and curve fitting using optimization on manifolds (WP 2A and 2B).

- Development of large-scale algorithms for nonnegative matrix factorization (NMF), and of large-scale machine learning algorithms that are numerically similar to the matrix singular value decomposition (SVD) (WP 3A and 3B).

- Development of optimal algorithms for linear system identification, model reduction and control (WP 4A and 4B).